No longer is assessment primarily for grading students on how well they have learnt what they have been taught.

Assessment is now about identifying what learners know, understand and can do – in varying degrees of detail – to provide better evidence for educational decision making.

ACER is at the forefront of reform and innovation in assessment theory and practice.

Thinking differently about assessment

Sharing collective knowledge and experience.

ACER regularly holds conferences that bring together experts in educational assessment.

Resources for schools

Assessment of general capabilities

A frame of reference for teaching, learning and assessing the development of ‘21st century’ skills in collaboration, critical thinking and creative thinking.

Learning Progression Explorer

An online tool that enables education stakeholders to explore evidence-based representations of growth in key learning areas.

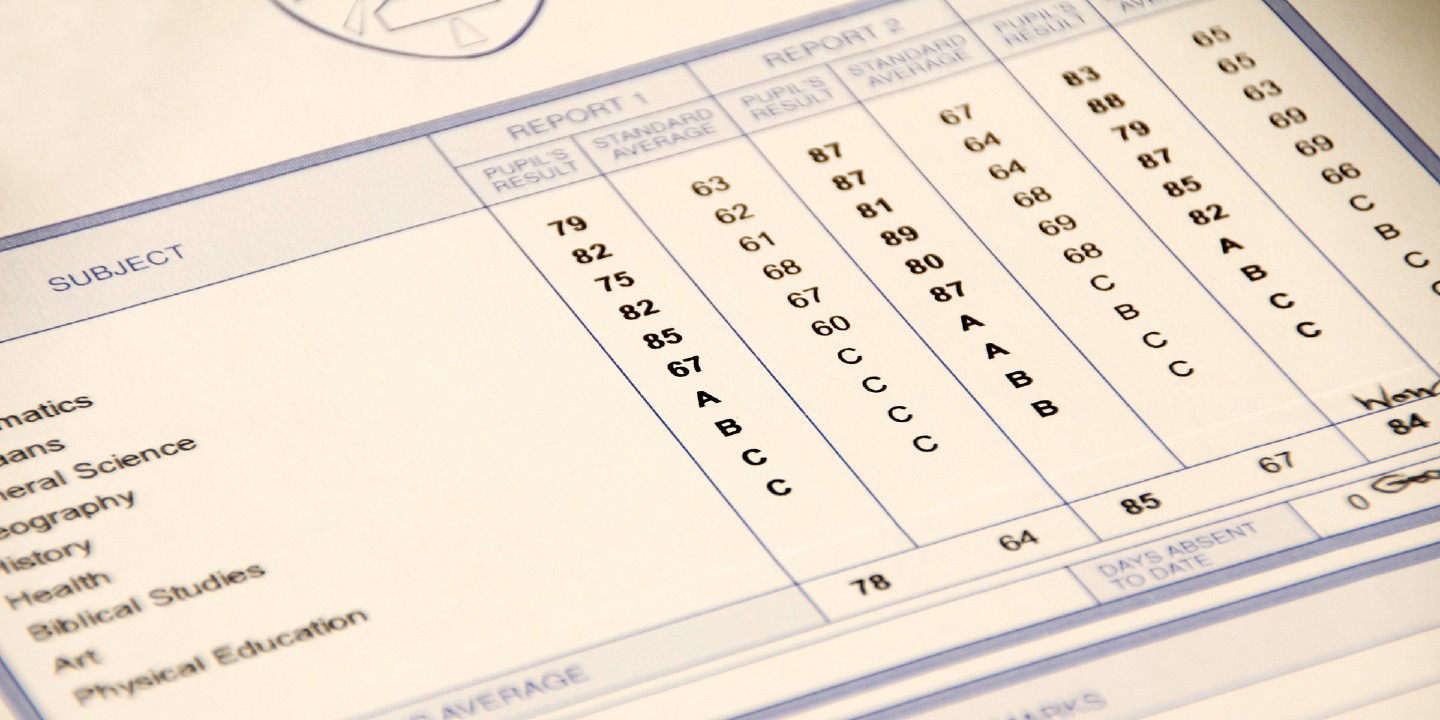

Communicating student learning progress

This project investigated alternatives to the traditional ‘school report’ as a way of communicating the progress students make in their learning.

Mapping the assessment reform journey

Follow two schools’ assessment reform experiences through interactive timelines featuring recorded interviews with key staff, together with artefacts of the reform process such as meeting agendas, protocols and presentations.